In the fall of 1973, the University of California, Berkeley released its graduate admissions statistics, immediately sparking an uproar: 44% of male applicants were admitted, compared to only 35% of female applicants. Was this ironclad proof of gender discrimination? When researchers broke the data down by individual department, they found something striking: in most departments, women were admitted at rates equal to or higher than men. This is one of the most confounding yet important phenomena in statistics: Simpson's Paradox.

1. What Is Simpson's Paradox?

Defining the Paradox

Simpson's Paradox is a statistical phenomenon in which a trend that appears within each of several groups disappears or even reverses when the groups are combined.[1] In other words, examining the data group by group leads to one conclusion, while examining the combined data leads to the opposite conclusion.

This is neither a calculation error nor a statistical fallacy: every rate is computed correctly at every level. It is a genuine mathematical phenomenon, and it reveals the dangerous pitfalls that can hide within aggregated data.

Mathematical Explanation: The Weighted Average Trap

At the core of Simpson's Paradox lies the counterintuitive nature of weighted averages. Let us illustrate this mathematically.

Suppose we compare the success rates of two groups, A and B, across two subcategories, 1 and 2:

- Group A's success rate in Category 1: $p_{A1}$, sample size $n_{A1}$

- Group A's success rate in Category 2: $p_{A2}$, sample size $n_{A2}$

- Group B's success rate in Category 1: $p_{B1}$, sample size $n_{B1}$

- Group B's success rate in Category 2: $p_{B2}$, sample size $n_{B2}$

Even if $p_{A1} > p_{B1}$ and $p_{A2} > p_{B2}$ (A outperforms B in both categories), the combined success rates can still satisfy:

$$
P_A = \frac{n_{A1}\, p_{A1} + n_{A2}\, p_{A2}}{n_{A1} + n_{A2}} \;<\; P_B = \frac{n_{B1}\, p_{B1} + n_{B2}\, p_{B2}}{n_{B1} + n_{B2}}
$$

The key lies in the asymmetry of sample distribution: if a large proportion of A's samples are concentrated in the category with a lower success rate, while B's samples are concentrated in the category with a higher success rate, then even if A performs better in every individual category, the overall weighted result may still favor B.[2]
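The weighted-average mechanism is easy to check numerically. The sketch below uses hypothetical counts (not taken from any study) in which A wins both categories but loses once the categories are pooled; the helper names `rate`, `pooled_rate`, and `is_reversal` are illustrative, not from any library.

```python
from fractions import Fraction

# Hypothetical counts: category -> (successes, trials).
Counts = dict[str, tuple[int, int]]

A: Counts = {"cat1": (9, 10), "cat2": (30, 100)}
B: Counts = {"cat1": (80, 100), "cat2": (2, 10)}


def rate(successes: int, trials: int) -> Fraction:
    """Exact success rate within one category."""
    return Fraction(successes, trials)


def pooled_rate(group: Counts) -> Fraction:
    """Total successes over total trials: the trial-weighted
    average of the category rates (the pooled rate in the formula above)."""
    successes = sum(s for s, _ in group.values())
    trials = sum(n for _, n in group.values())
    return Fraction(successes, trials)


def is_reversal(a: Counts, b: Counts) -> bool:
    """True if `a` beats `b` in every category yet loses after pooling."""
    wins_everywhere = all(rate(*a[c]) > rate(*b[c]) for c in a)
    return wins_everywhere and pooled_rate(a) < pooled_rate(b)


for c in A:
    print(f"{c}: A {float(rate(*A[c])):.0%}, B {float(rate(*B[c])):.0%}")
print(f"pooled: A {float(pooled_rate(A)):.1%}, B {float(pooled_rate(B)):.1%}")
print("reversal:", is_reversal(A, B))
```

Here A wins each category (90% vs 80%, 30% vs 20%), but 100 of its 110 trials fall in the harder category, so it pools to about 35.5% against B's 74.5%.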

2. Classic Cases

Case 1: The UC Berkeley 1973 Admissions Controversy

This is the most famous instance of Simpson's Paradox and an essential case in statistics textbooks.[3]

Surface Data: "Ironclad Proof" of Gender Discrimination

| Gender | Applicants | Admitted | Admission Rate |
|---|---|---|---|
| Male | 8,442 | 3,738 | 44% |
| Female | 4,321 | 1,512 | 35% |

It certainly appeared that serious gender discrimination existed: the male admission rate exceeded the female rate by 9 percentage points!

Deeper Analysis: The Truth Revealed

However, when Peter Bickel, Eugene Hammel, and J. William O'Connell examined the data department by department, an entirely different picture emerged. Below is data from two of the departments, which the published data identify only by letter:

| Department | Gender | Applicants | Admitted | Admission Rate |
|---|---|---|---|---|
| A | Male | 825 | 512 | 62% |
| | Female | 108 | 89 | 82% |
| F | Male | 373 | 22 | 6% |
| | Female | 341 | 24 | 7% |

In Department A, the female admission rate (82%) was far higher than the male rate (62%)! In Department F, the female rate (7%) also slightly exceeded the male rate (6%). In fact, in most of the 85 departments analyzed, female admission rates were equal to or higher than male rates.

The Cause of the Paradox

So why did the overall data suggest higher male admission rates? The answer lies in the difference in application distribution:

- Women tended to apply to highly competitive departments with lower admission rates (such as humanities and social sciences)

- Men tended to apply to departments with higher admission rates (such as engineering and natural sciences)

This difference in application patterns, rather than discrimination in admissions decisions, was the primary driver of the overall gap in admission rates.[4]
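The two departments in the table are already enough to reproduce the reversal. The sketch below pools them and reports what fraction of each gender's applicants went to the low-admission department. It uses 512 admitted men in the high-admission department (512/825 ≈ 62%, the count in the Berkeley data as usually tabulated); the dictionary keys and function names are illustrative.

```python
from fractions import Fraction

# (admitted, applicants) by department and gender, from the two-department table.
DATA = {
    "high-admission dept": {"male": (512, 825), "female": (89, 108)},
    "low-admission dept": {"male": (22, 373), "female": (24, 341)},
}


def pooled(gender: str) -> Fraction:
    """Admission rate after merging both departments."""
    admitted = sum(d[gender][0] for d in DATA.values())
    applied = sum(d[gender][1] for d in DATA.values())
    return Fraction(admitted, applied)


def share_in(dept: str, gender: str) -> Fraction:
    """Fraction of this gender's applicants who applied to `dept`."""
    applied = sum(d[gender][1] for d in DATA.values())
    return Fraction(DATA[dept][gender][1], applied)


for dept, rows in DATA.items():
    m = Fraction(*rows["male"])
    f = Fraction(*rows["female"])
    print(f"{dept}: male {float(m):.1%}, female {float(f):.1%}")

for g in ("male", "female"):
    print(f"{g}: pooled {float(pooled(g)):.1%}, "
          f"share in low-admission dept {float(share_in('low-admission dept', g)):.1%}")
```

Women lead in both departments, yet their pooled rate comes out near 25% against roughly 45% for men, because about three-quarters of these female applicants chose the low-admission department versus under a third of the male applicants.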


Case 2: LeBron James vs. Karl Malone

Basketball statistics provide another excellent demonstration of Simpson's Paradox. Let us compare the career shooting percentages of two NBA legends.

Category-Level Data

| Player | 2-Point FG% | 3-Point FG% |
|---|---|---|
| LeBron James | 54.7% | 34.6% |
| Karl Malone | 52.3% | 27.4% |

Whether looking at two-point or three-point field goals, LeBron's shooting percentage is higher than Malone's. Intuitively, LeBron should have a higher overall shooting percentage, right?

The Reversal in Overall Data

However, when we calculate the overall field goal percentage:

- LeBron James: approximately 50.4%

- Karl Malone: approximately 51.6%

Malone actually has the higher overall percentage!

Analysis of the Cause

The key lies in the shot distribution:

- LeBron's three-point attempts account for a much larger proportion of his total shots compared to Malone

- Three-point shots inherently have a lower success rate than two-point shots

- Therefore, even though LeBron is more accurate in both shot types, the high volume of lower-percentage three-point attempts drags down his overall numbers
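Because overall FG% is a weighted average of the 2-point and 3-point percentages, the quoted figures imply each player's share of attempts taken from three. If $s$ is that share, then overall $= s \cdot p_3 + (1 - s) \cdot p_2$, so $s = (p_2 - \text{overall}) / (p_2 - p_3)$. The sketch below applies this to the rounded percentages above, so the resulting shares are only approximate; the function name is illustrative.

```python
def implied_three_share(p2: float, p3: float, overall: float) -> float:
    """Solve overall = s*p3 + (1 - s)*p2 for s, the share of field-goal
    attempts taken from three-point range."""
    return (p2 - overall) / (p2 - p3)


# Rounded percentages quoted in the text: (2P%, 3P%, overall FG%).
players = {
    "LeBron James": (0.547, 0.346, 0.504),
    "Karl Malone": (0.523, 0.274, 0.516),
}

for name, (p2, p3, overall) in players.items():
    s = implied_three_share(p2, p3, overall)
    print(f"{name}: about {s:.0%} of attempts were threes")
```

This gives roughly 21% for LeBron and about 3% for Malone. Malone's estimate is especially sensitive to rounding, because his 2-point and overall percentages differ by less than one point.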

This example vividly illustrates that a single aggregate figure can seriously distort the truth. When evaluating player performance, one must account for the structural differences in shot selection.

Case 3: Kidney Stone Treatment

Cases of Simpson's Paradox in medicine are particularly concerning because they directly affect patient health and even survival.

The Classic 1986 Study

Charig et al. published a study in 1986 comparing treatments for kidney stones. The comparison usually cited involves two of them: traditional open surgery (Treatment A) and minimally invasive percutaneous nephrolithotomy (Treatment B).[5]

| Treatment | Overall Success Rate |
|---|---|
| Open Surgery (A) | 78% (273/350) |
| Minimally Invasive (B) | 83% (289/350) |

On the surface, minimally invasive surgery (B) appeared more effective. However, when the data was stratified by kidney stone size:

| Stone Size | Open Surgery (A) | Minimally Invasive (B) |
|---|---|---|
| Small Stones (< 2 cm) | 93% (81/87) | 87% (234/270) |
| Large Stones (≥ 2 cm) | 73% (192/263) | 69% (55/80) |

Regardless of stone size, open surgery had a higher success rate! The paradox arose because doctors tended to assign less severe cases (small stones) to minimally invasive surgery and more severe cases (large stones) to traditional open surgery. This allocation bias caused the reversal in overall data.
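One way to remove this allocation bias is to ask what each treatment's overall success rate would be if both had been used on the same mix of patients. The sketch below does this by direct standardization: each treatment's stone-size-specific rates are weighted by the stone-size distribution of all 700 patients combined. Choosing the combined population as the standard is an assumption; another reference population would give somewhat different numbers. Names are illustrative.

```python
from fractions import Fraction

# (successes, patients) by stone size, from the stratified table above.
TREATMENTS = {
    "open surgery (A)": {"small": (81, 87), "large": (192, 263)},
    "minimally invasive (B)": {"small": (234, 270), "large": (55, 80)},
}


def crude_rate(strata: dict[str, tuple[int, int]]) -> Fraction:
    """Unadjusted rate: pooled successes over pooled patients."""
    return Fraction(sum(s for s, _ in strata.values()),
                    sum(n for _, n in strata.values()))


def standardized_rate(strata: dict[str, tuple[int, int]],
                      weights: dict[str, Fraction]) -> Fraction:
    """Stratum-specific rates averaged with one common set of weights."""
    return sum((Fraction(*strata[k]) * w for k, w in weights.items()), Fraction(0))


# Standard population: all patients from both treatments combined.
totals = {k: sum(t[k][1] for t in TREATMENTS.values()) for k in ("small", "large")}
grand_total = sum(totals.values())
weights = {k: Fraction(n, grand_total) for k, n in totals.items()}

for name, strata in TREATMENTS.items():
    print(f"{name}: crude {float(crude_rate(strata)):.1%}, "
          f"standardized {float(standardized_rate(strata, weights)):.1%}")
```

After standardization, open surgery comes out at about 83% and the minimally invasive procedure at about 78%, restoring the ordering seen within each stone-size group.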

3. Additional Examples

Baseball Batting Averages

Baseball statistics are a breeding ground for Simpson's Paradox. The most famous example involves the batting averages of Derek Jeter and David Justice in 1995 and 1996.[6]

In both 1995 and 1996, Justice had a higher batting average than Jeter. Yet when the two years are combined, Jeter's overall batting average is higher! The reason is that Jeter had far more at-bats in 1996, the stronger year for both players, while most of Justice's at-bats came in 1995, the weaker year.
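Combining seasons means adding hits and at-bats, not averaging the two yearly averages, and the two procedures can disagree. The sketch below uses the commonly cited hits/at-bats figures for this example, which the text above does not give (Jeter 12/48 in 1995 and 183/582 in 1996; Justice 104/411 and 45/140). The plain mean of the yearly averages keeps Justice ahead, while the at-bat-weighted combined average puts Jeter ahead. Function names are illustrative.

```python
from fractions import Fraction

# (hits, at-bats) per season, as commonly cited for this example.
SEASONS = {
    "Jeter": {1995: (12, 48), 1996: (183, 582)},
    "Justice": {1995: (104, 411), 1996: (45, 140)},
}


def mean_of_averages(seasons: dict[int, tuple[int, int]]) -> Fraction:
    """Unweighted mean of the yearly batting averages (ignores at-bats)."""
    avgs = [Fraction(h, ab) for h, ab in seasons.values()]
    return sum(avgs, Fraction(0)) / len(avgs)


def combined_average(seasons: dict[int, tuple[int, int]]) -> Fraction:
    """Total hits over total at-bats, i.e. the at-bat-weighted average."""
    return Fraction(sum(h for h, _ in seasons.values()),
                    sum(ab for _, ab in seasons.values()))


for player, seasons in SEASONS.items():
    yearly = ", ".join(f"{y}: {float(Fraction(*hab)):.3f}" for y, hab in seasons.items())
    print(f"{player}: {yearly}; mean of averages {float(mean_of_averages(seasons)):.3f}; "
          f"combined {float(combined_average(seasons)):.3f}")
```

Combined, Jeter bats about .310 to Justice's .270, even though Justice leads in each season and in the simple mean of the two seasons.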

COVID-19 Vaccine Efficacy Data

In 2021, COVID-19 data from Israel sparked questions from vaccine skeptics: in certain statistics, vaccinated people made up a majority of severe COVID-19 cases![7]

However, this was a classic manifestation of Simpson's Paradox. Israel's vaccination rate was extremely high, especially among the elderly, so most older people were vaccinated. Because older people have a much higher baseline risk of severe illness, the vaccinated group was weighted toward high-risk individuals, and the unadjusted figures made vaccination look far less protective than it was.

When the data was analyzed by age group, the picture changed: within every age group, vaccinated individuals had far lower rates of severe illness than the unvaccinated.

Death Penalty Sentencing and Race

In 1981, Radelet studied death penalty sentencing data in Florida and found disturbing racial disparities.[8]

In the aggregate data, white defendants were slightly more likely to receive the death penalty than Black defendants, which seemed to rule out bias against Black defendants. However, when the data was stratified by the race of the victim, Black defendants were more likely to be sentenced to death within each victim group. The reversal arises because killers of white victims were far more likely to receive the death penalty, and most white defendants had white victims. The complexity of this case lies in the fact that deciding which stratification to use for interpreting the data is itself a value judgment.

4. Historical Origins

Karl Pearson (1899): The Earliest Observation

The history of Simpson's Paradox predates the name "Simpson" itself.

Karl Pearson (1857-1936), one of the founders of modern statistics, noticed a similar phenomenon as early as 1899 while studying heredity and natural selection.[9] He found that when data came from different subgroups, the correlation after merging could run opposite to the correlations within each subgroup.

Udny Yule (1903): The Confounding Effect

George Udny Yule (1871-1951) analyzed this problem more systematically in 1903.[10] He pointed out that when a "latent variable" exists, the apparent association between two variables can be spurious. The reversal itself is sometimes called the "Yule-Simpson effect," and the mechanism Yule identified is what is now called confounding.

Edward Simpson (1951): The Formal Naming

Edward H. Simpson (1922-2019) was a British statistician who formally described this paradox in a paper published in 1951.[11]

Interestingly, Simpson himself was surprised that the paradox was named after him. In an interview, he modestly remarked: "I merely wrote down what was already known."[12] Nevertheless, his paper was the first to present the phenomenon in a clear and concise academic format, and the name "Simpson's Paradox" gradually became established.

Judea Pearl: The Causal Revolution

Judea Pearl (1936-), the 2011 Turing Award laureate, elevated Simpson's Paradox to an entirely new philosophical level.[13]

Pearl argued that Simpson's Paradox is not merely a statistical problem but a problem of causal inference. He stated: "No purely statistical criterion can tell us when to aggregate data and when to stratify. The answer depends on our understanding of the causal structure."

Pearl developed causal diagrams and do-calculus, providing a mathematical framework for resolving Simpson's Paradox. In his theory, whether a variable should be controlled for depends on its position in the causal network — whether it is a confounder, a mediator, or a collider.[14]
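In the simplest setting, a single confounder $Z$ of a treatment $X$ and an outcome $Y$, Pearl's framework reduces to the back-door adjustment formula. It is stated here in its standard textbook form and assumes $Z$ blocks every back-door path from $X$ to $Y$. Set beside the ordinary conditional probability, it shows that the two differ only in how the strata are weighted:

$$
P(Y = y \mid \mathrm{do}(X = x)) = \sum_{z} P(Y = y \mid X = x, Z = z)\, P(Z = z)
$$

$$
P(Y = y \mid X = x) = \sum_{z} P(Y = y \mid X = x, Z = z)\, P(Z = z \mid X = x)
$$

When $P(Z = z \mid X = x)$ differs sharply between treatment groups, as stone size did between the two kidney stone treatments, the second expression can reverse the ordering given by the first. If $Z$ were a mediator or a collider, the adjustment in the first line would not estimate the total causal effect, which is why the causal diagram has to come first.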

5. Why Does It Happen?

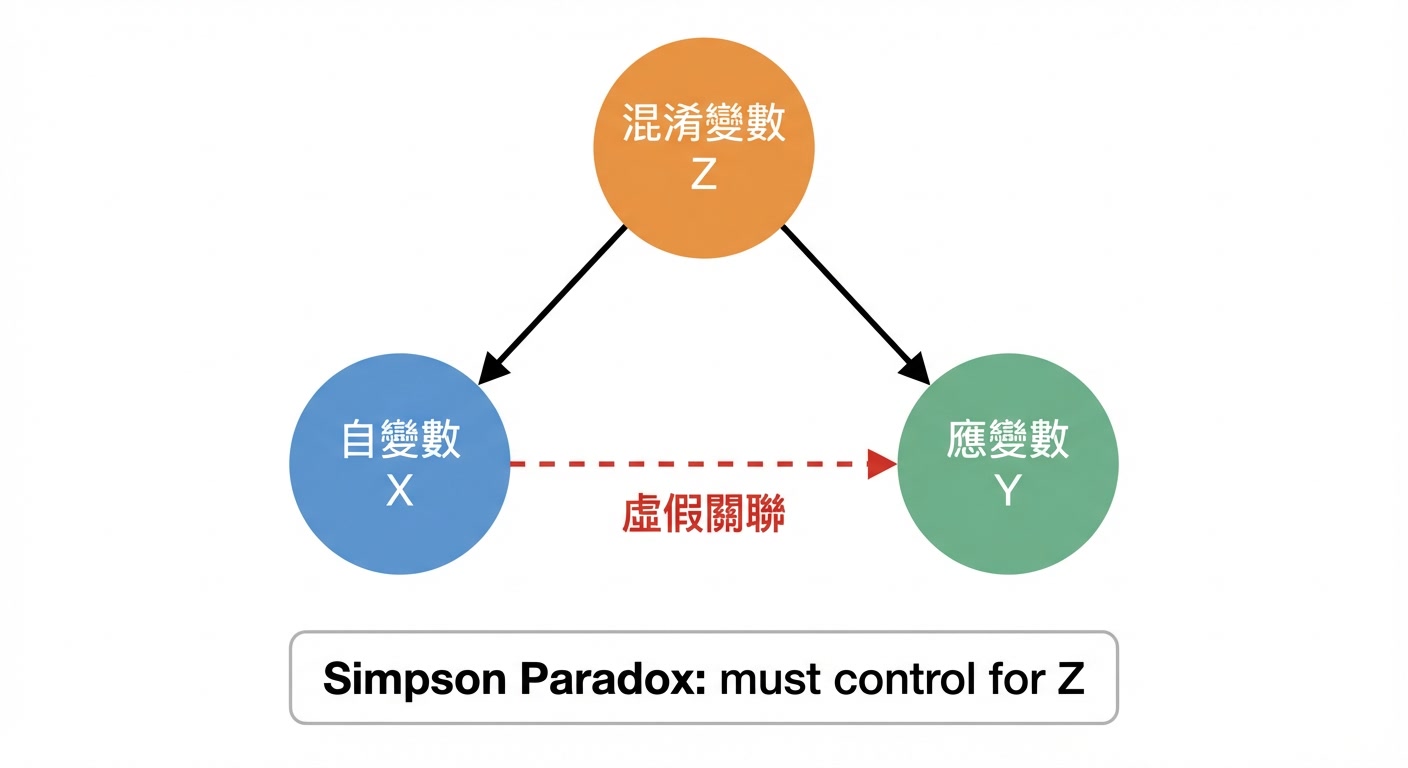

Confounding Variables

The most common cause of Simpson's Paradox is the presence of a confounding variable. A confounding variable is a third factor that simultaneously influences both the independent variable and the dependent variable.

Using the UC Berkeley case as an example:

- Independent variable: the applicant's gender

- Dependent variable: admission outcome

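- Third variable: the department applied to

Because gender influenced department choice (women tended to apply to highly competitive departments), and departments in turn influenced admission rates, simply comparing overall male and female admission rates produced misleading results. Strictly speaking, department lies on the path from gender to admission rather than influencing gender, so in Pearl's vocabulary it is a mediator rather than a confounder. Stratifying by department isolates the direct effect of gender on admission, which is exactly the question a discrimination claim asks. Stone size in the kidney stone study is the textbook confounder: it influenced both which treatment a patient received and whether the treatment succeeded.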

Base Rate Effect

Another key factor is asymmetry in how each group's observations are distributed across subgroups. Even if A outperforms B in every subgroup, if A's observations are disproportionately concentrated in the "low base rate" subgroup while B's are concentrated in the "high base rate" subgroup, B may still come out ahead in the overall weighted result. The groups need not differ in total size: in the kidney stone study, both treatments had exactly 350 patients.

Think of it this way: one student scores 90 on both quizzes, while another scores 80. On the major exam, the first student scores 70 and the second scores 60, so the first student is ahead on every component. But if the first student's quizzes count for only 10% of the total grade and the exam for 90%, while the second student's quizzes count for 90% and the exam for 10%, the second student ends up with the higher overall grade: 0.1 × 90 + 0.9 × 70 = 72, versus 0.9 × 80 + 0.1 × 60 = 78.

6. How to Avoid Being Misled

1. Always Ask About the Structure Behind the Data

When confronted with statistical data, do not simply look at the bottom line. Ask:

- How was this data aggregated?

- Are there hidden groupings?

- Are the groups spread across the subgroups in similar proportions?

2. Build a Causal Model

Pearl's recommendation: before analyzing data, draw a diagram of what you believe the causal relationships to be. This helps identify which variables are confounders, which should be controlled for, and which should not.
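Once the arrows are drawn, the role of a third variable can be read off the diagram. The sketch below encodes a diagram as a set of directed edges and labels a variable using its direct arrows only. This is a deliberate simplification (real analyses apply the back-door criterion to full paths), and the function `role` and the edge sets are hypothetical illustrations built from the arrows the text describes: stone size affecting both treatment and outcome, and gender affecting department choice, which affects admission.

```python
# A causal diagram as a set of (cause, effect) edges.
Edge = tuple[str, str]


def role(z: str, x: str, y: str, edges: set[Edge]) -> str:
    """Classify z relative to exposure x and outcome y using direct edges only."""
    if (z, x) in edges and (z, y) in edges:
        return "confounder: adjust for it"
    if (x, z) in edges and (z, y) in edges:
        return "mediator: adjust only to isolate the direct effect"
    if (x, z) in edges and (y, z) in edges:
        return "collider: do not adjust"
    return "no direct role found"


kidney = {("stone size", "treatment"), ("stone size", "success"),
          ("treatment", "success")}
berkeley = {("gender", "department"), ("department", "admission"),
            ("gender", "admission")}

print(role("stone size", "treatment", "success", kidney))
print(role("department", "gender", "admission", berkeley))
```

The same arithmetic reversal appears in both cases, but the diagram says stone size should be adjusted for to estimate the treatment effect, while adjusting for department answers the narrower question of gender's direct effect on admission.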

3. Stratified Analysis

When confounding variables are suspected, conducting a stratified analysis is standard practice. But remember: the basis for stratification should rest on causal reasoning, not merely statistical convenience.
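One standard estimator for combining strata, once the stratifying variable has been justified, is the Mantel-Haenszel pooled odds ratio, which compares treatments within each stratum before combining. The sketch below applies it to the kidney stone counts from Case 3 (successes and failures for open surgery A versus minimally invasive B). It illustrates one stratified estimator among several, and the function names are illustrative.

```python
from fractions import Fraction

# Per stratum: (A successes, A failures, B successes, B failures),
# A = open surgery, B = minimally invasive; counts from Case 3.
STRATA = {
    "small": (81, 6, 234, 36),
    "large": (192, 71, 55, 25),
}


def crude_odds_ratio(strata: dict[str, tuple[int, int, int, int]]) -> Fraction:
    """Odds ratio (A vs B) after collapsing all strata into one 2x2 table."""
    a, b, c, d = (sum(t[i] for t in strata.values()) for i in range(4))
    return Fraction(a * d, b * c)


def mantel_haenszel_or(strata: dict[str, tuple[int, int, int, int]]) -> Fraction:
    """Mantel-Haenszel pooled odds ratio: sum(a*d/n) / sum(b*c/n) over strata."""
    num = sum((Fraction(a * d, a + b + c + d) for a, b, c, d in strata.values()),
              Fraction(0))
    den = sum((Fraction(b * c, a + b + c + d) for a, b, c, d in strata.values()),
              Fraction(0))
    return num / den


print(f"crude OR (A vs B): {float(crude_odds_ratio(STRATA)):.2f}")
print(f"Mantel-Haenszel OR (A vs B): {float(mantel_haenszel_or(STRATA)):.2f}")
```

The crude odds ratio is below 1 (about 0.75, favoring B), while the Mantel-Haenszel estimate is above 1 (about 1.45, favoring A), matching the within-stratum comparisons.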

4. Be Wary of Political Uses of Aggregate Data

Simpson's Paradox can be exploited by politicians and interest groups, who may report whichever level of aggregation supports their position. Understanding this paradox can help you recognize such manipulation.[15]

7. The Importance of Data Literacy

In this data-driven era, the lessons of Simpson's Paradox are more important than ever.

Implications for Individuals

Every time you see a news report citing statistics to support a particular argument, pause and ask yourself:

- Has this data been analyzed by subgroup?

- Could the overall trend be an illusion?

- Are there overlooked confounding variables?

Implications for Professionals

For data analysts, researchers, and decision-makers, understanding Simpson's Paradox is a fundamental competency. When publishing research findings or making decisions, one must consider:

- Is my method of data aggregation appropriate?

- Have I conducted the necessary stratified analyses?

- Do my conclusions withstand scrutiny from a causal inference perspective?

Implications for Society

Simpson's Paradox reminds us that data does not speak for itself — data must be interpreted correctly. In public policy, medical decision-making, and social issues, we cannot blindly trust statistical figures but must cultivate critical data thinking.

Conclusion: Humility Before Data

Simpson's Paradox is more than just a fascinating mathematical phenomenon — it is a wake-up call for human cognition.

It tells us that surface-level patterns may be illusory. When we aggregate data, we may be creating trends that do not actually exist.

It reminds us that statistics cannot substitute for causal thinking. Data can only tell us "what is," not "why." Understanding causal relationships requires thinking beyond the data itself.

It teaches us to maintain humility before data. Even the most precise numbers can deceive when aggregated inappropriately. We should maintain a healthy skepticism toward statistical conclusions.

From Pearson's initial observation in 1899, to Simpson's formal description in 1951, to Pearl's causal revolution, this academic journey spanning more than a century ultimately points to a profound insight: understanding the world requires not only data, but also causal knowledge about how that data was generated.

The next time you encounter a surprising statistic, remember the UC Berkeley story, the shooting records of LeBron and Malone, and the treatment outcomes of those kidney stone patients. Ask yourself: could a Simpson's Paradox be hiding behind this data?

References

- [1] Simpson, E. H. (1951). "The interpretation of interaction in contingency tables." Journal of the Royal Statistical Society, Series B, 13(2), 238-241.

- [2] Pearl, J. (2014). "Comment: Understanding Simpson's Paradox." The American Statistician, 68(1), 8-13.

- [3] Bickel, P. J., Hammel, E. A., & O'Connell, J. W. (1975). "Sex Bias in Graduate Admissions: Data from Berkeley." Science, 187(4175), 398-404.

- [4] Freedman, D., Pisani, R., & Purves, R. (2007). Statistics (4th ed.). W. W. Norton & Company, Chapter 2.

- [5] Charig, C. R., Webb, D. R., Payne, S. R., & Wickham, J. E. A. (1986). "Comparison of treatment of renal calculi by open surgery, percutaneous nephrolithotomy, and extracorporeal shockwave lithotripsy." British Medical Journal, 292(6524), 879-882.

- [6] Samuels, M. L. (1993). "Simpson's Paradox and Related Phenomena." Journal of the American Statistical Association, 88(421), 81-88.

- [7] Morris, J. A. (2021). "Simpson's Paradox and COVID-19 vaccine efficacy." The BMJ, 374:n1912.

- [8] Radelet, M. L. (1981). "Racial Characteristics and the Imposition of the Death Penalty." American Sociological Review, 46(6), 918-927.

- [9] Pearson, K., Lee, A., & Bramley-Moore, L. (1899). "Mathematical contributions to the theory of evolution. VI. Genetic (reproductive) selection." Philosophical Transactions of the Royal Society of London. Series A, 192, 257-330.

- [10] Yule, G. U. (1903). "Notes on the theory of association of attributes in statistics." Biometrika, 2(2), 121-134.

- [11] Simpson, E. H. (1951); see [1].

- [12] Wagner, C. H. (1982). "Simpson's Paradox in Real Life." The American Statistician, 36(1), 46-48.

- [13] Pearl, J. (2009). Causality: Models, Reasoning, and Inference (2nd ed.). Cambridge University Press.

- [14] Pearl, J., & Mackenzie, D. (2018). The Book of Why: The New Science of Cause and Effect. Basic Books.

- [15] Hernán, M. A., Clayton, D., & Keiding, N. (2011). "The Simpson's paradox unraveled." International Journal of Epidemiology, 40(3), 780-785.